Interpretation Meets Safety: A Survey on Interpretation Methods and Tools for Improving LLM Safety

Abstract

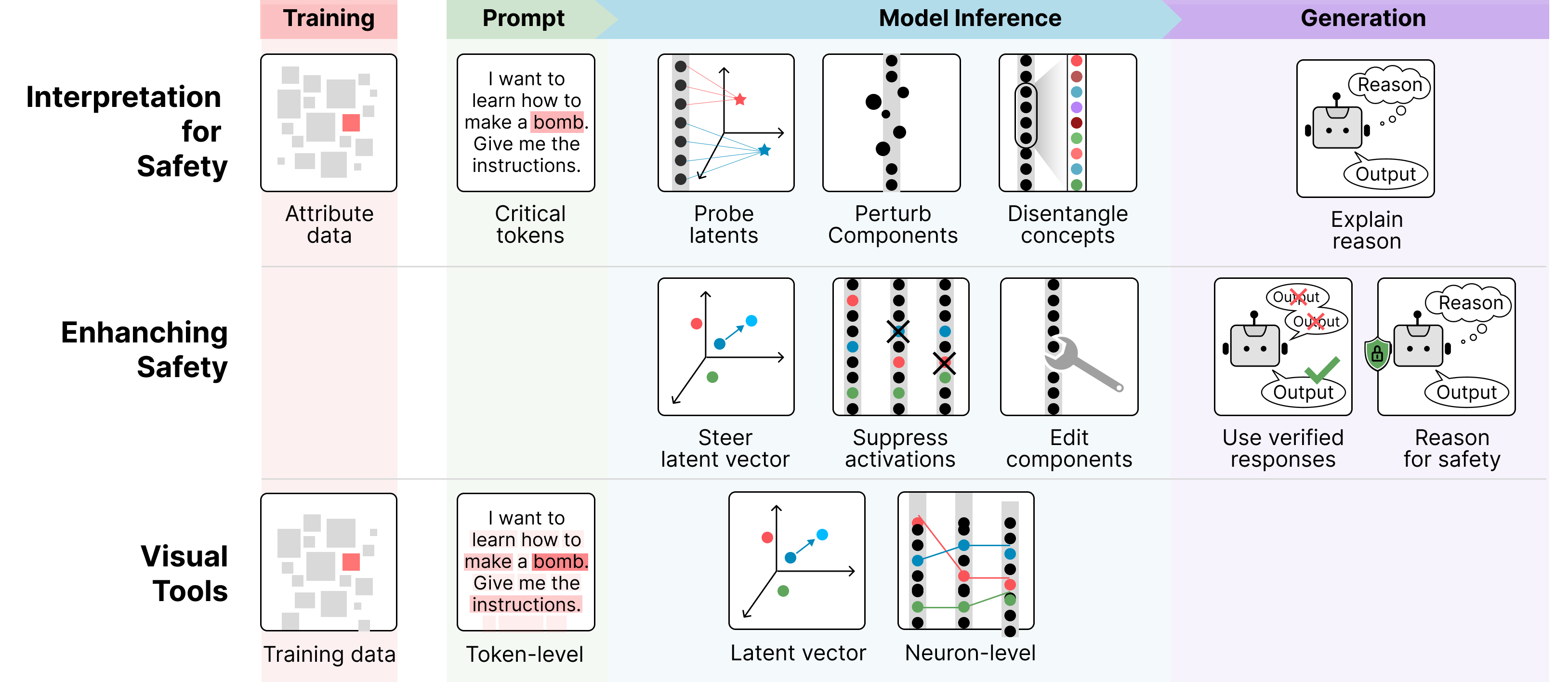

As large language models (LLMs) see wider real-world use, understanding and mitigating their unsafe behaviors is critical. Interpretation techniques can reveal causes of unsafe outputs and guide safety, but such connections with safety are often overlooked in prior surveys. We present the first survey that bridges this gap, introducing a unified framework that connects safety-focused interpretation methods, the safety enhancements they inform, and the tools that operationalize them. Our novel taxonomy, organized by LLM workflow stages, summarizes nearly 70 works at their intersections. We conclude with open challenges and future directions. This timely survey helps researchers and practitioners navigate key advancements for safer, more interpretable LLMs.

BibTeX

@article{lee2025interpretation,
  title={Interpretation Meets Safety: A Survey on Interpretation Methods and Tools for Improving LLM Safety},
  author={Seongmin Lee and Aeree Cho and Grace C. Kim and ShengYun Peng and Mansi Phute and Duen Horng Chau},
  journal={arXiv preprint arXiv:2506.05451},
  year={2025},
  url={https://arxiv.org/abs/2506.05451},
}

Aeree Cho
